Are you accomplishing what your organization set out to accomplish? At Transform Consulting Group (TCG), we know you’re doing important work. We also know you are passionate about serving your community. But are you actually doing it? And if so, how do you prove it?

Program evaluation can help your organization determine whether the change it set out to accomplish actually occurred. Change can be knowledge gained, attitude change, or behavior change. For example, did a literacy tutoring program help students who were not reading on grade level catch up by the end of the program period? Or, are the low-income children who participated in a high-quality pre-K program ready for kindergarten?

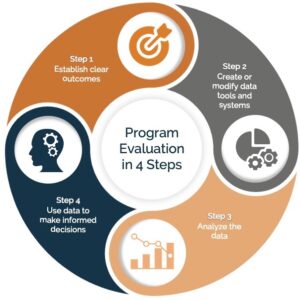

We are data nerds at TCG, and we love helping organizations develop and implement evaluation plans to assess their change using a four-step process.

Program Evaluation in Four Steps:

- Establish clear outcomes

- Create or modify data tools and systems

- Analyze the data

- Use data to make informed decisions

The first step is to ensure there are clear outcomes in place that support an organization’s or program’s goals. We work to develop SMART results: specific, measurable, achievable, relevant, and timely. In this step, we typically review or create a logic model (we have written a blog post on producing logic models and offer a free tool), which is simply a structured way of aligning your programs with the change (or outcomes) you want to accomplish. For example, a college-readiness program may wish to increase the number of students who can (1) identify a major or career they are interested in pursuing after high school or (2) understand how to apply for college financial aid.

The second step focuses on having the right data tools and systems to measure and report on the designated outcomes. We help organizations determine the most appropriate tool(s) to collect, track, and monitor progress toward the identified outcomes. When suggesting data tools, we consider organizational capacity (staff time, knowledge, and budget). Some examples include participant surveys, assessments, and student academic records. We aim for data tools that are valid and reliable and that provide the data necessary to monitor progress.

The third step is to analyze the data once it has been collected and present the results in an easily understood format. The data is analyzed to determine whether program outcomes were met and what change, if any, occurred. This is often the step where organizations get stuck because they don’t have the staff time or knowledge to complete the analysis. We tell our clients this is the fun part because we can see if what they set out to accomplish actually occurred!
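To make the analysis step concrete, here is a minimal sketch of a pre/post comparison for something like the literacy tutoring example. Everything in it is an assumption made for illustration: the `Participant` record, the benchmark score of 75, and the four made-up participants do not come from a real program or from TCG’s own tools.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    """Hypothetical record: one assessment score before and after the program."""
    name: str
    pre_score: float
    post_score: float


def share_meeting_benchmark(scores, benchmark):
    """Return the fraction of scores at or above the benchmark."""
    return sum(score >= benchmark for score in scores) / len(scores)


def summarize_change(participants, benchmark):
    """Compare the group before and after the program period."""
    pre = [p.pre_score for p in participants]
    post = [p.post_score for p in participants]
    gains = [after - before for before, after in zip(pre, post)]
    return {
        "pre_share": share_meeting_benchmark(pre, benchmark),
        "post_share": share_meeting_benchmark(post, benchmark),
        "mean_gain": sum(gains) / len(gains),
    }


# Made-up data for illustration only.
participants = [
    Participant("A", 62, 78),
    Participant("B", 70, 74),
    Participant("C", 55, 69),
    Participant("D", 81, 85),
]

# Assumed benchmark: a score of 75 stands in for "reading on grade level".
summary = summarize_change(participants, benchmark=75)
print(summary)
```

A summary like this answers the basic question of whether the share of participants meeting the outcome moved. It does not show that the program caused the change, which usually takes a comparison group or a more careful design.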

Common research questions that drive organizations to conduct program evaluations include:

- Are program participants being reached as intended? If so, why? If not, why not?

- To what extent are desired program changes occurring? Was there a significant difference or just a tiny difference? Is there a specific group that is not being impacted?

- Is the program worth the resources it costs? What is the “return on investment” for this program or service?
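The second and third questions above can be roughed out with a few lines of arithmetic. The sketch below is one possible approach under stated assumptions, not a prescribed method: it uses a paired effect size (mean gain divided by the standard deviation of the gains) to compare subgroups, and a simple return-on-investment ratio. The group names, scores, and dollar figures are all made up, and putting a dollar value on program benefits is itself a judgment call an evaluator would need to justify.

```python
from statistics import mean, stdev


def paired_effect_size(pre, post):
    """Mean gain divided by the standard deviation of the gains (paired Cohen's d)."""
    gains = [after - before for before, after in zip(pre, post)]
    return mean(gains) / stdev(gains)


def return_on_investment(benefit, cost):
    """Net benefit per dollar spent: (benefit - cost) / cost."""
    return (benefit - cost) / cost


# Made-up pre/post scores for two hypothetical subgroups.
groups = {
    "grade_3": ([60, 65, 70], [72, 75, 84]),
    "grade_4": ([66, 71, 68], [67, 70, 71]),
}

# A much smaller effect in one subgroup flags a group that may not be impacted.
effect_sizes = {
    name: paired_effect_size(pre, post) for name, (pre, post) in groups.items()
}

# Assumed figures: $15,000 in estimated benefits against $10,000 in program cost.
roi = return_on_investment(benefit=15_000, cost=10_000)

for name, d in effect_sizes.items():
    print(f"{name}: effect size {d:.2f}")
print(f"ROI: {roi:.0%}")
```

An effect size describes how large a change is, not whether it is statistically significant. With real data and larger samples, a formal test such as a paired t-test would be the usual next step.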

The fourth step is to discuss the program evaluation results and make informed decisions based on what the data tells us. We compile a summary report and/or slide deck presentation of the evaluation data so that internal and external stakeholders can review the results and discuss the implications. Good evaluations often lead to recommendations for improvement, such as enhanced professional development, diversified participant recruitment strategies, and/or program model changes. This is also an opportunity to discuss what the results mean for future programming, including ongoing program evaluation practices within the organization.

In today’s era of accountability, what gets measured gets done. If you don’t measure results, you can’t tell success from failure[1]. Transform Consulting Group equips organizations to celebrate their accomplishments and inform growth opportunities. Contact us today for more information on how Transform Consulting Group can help assess your organization’s impact.

[1] Reinventing Government, Osborne and Gaebler, 1992.